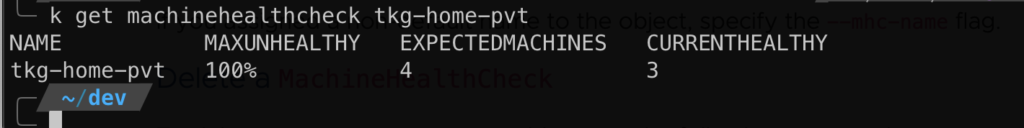

In Tanzu Kubernetes Grid, Cluster API implements MachineHealthCheck, which provides node health monitoring and node auto-repair for Tanzu Kubernetes clusters.
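If you want to see these objects for yourself, here is a minimal sketch. It assumes your current kubectl context points at the management cluster, which is where the Cluster API objects live. The function name `show_machine_health` is just something I made up for illustration.

```shell
# Assumption: the current kubectl context is the TKG management cluster.
# show_machine_health is a hypothetical helper name, not part of any CLI.
show_machine_health() {
  # MachineHealthCheck objects define the health conditions being watched.
  kubectl get machinehealthchecks --all-namespaces
  # Machine objects are the Cluster API view of each node's VM.
  kubectl get machines --all-namespaces
}
```

Calling `show_machine_health` prints both lists, so you can compare the health checks against the machines they cover.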

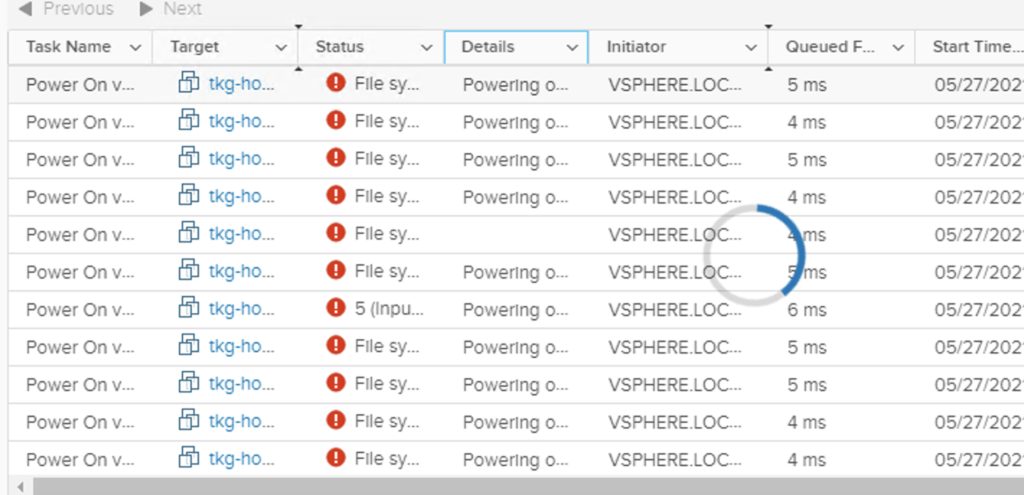

In practice, it automatically reconciles the cluster back to its desired node configuration when a node fails. I saw this in action when a power outage corrupted one of my worker nodes.

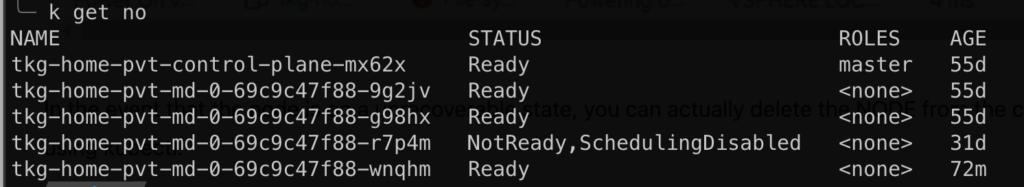

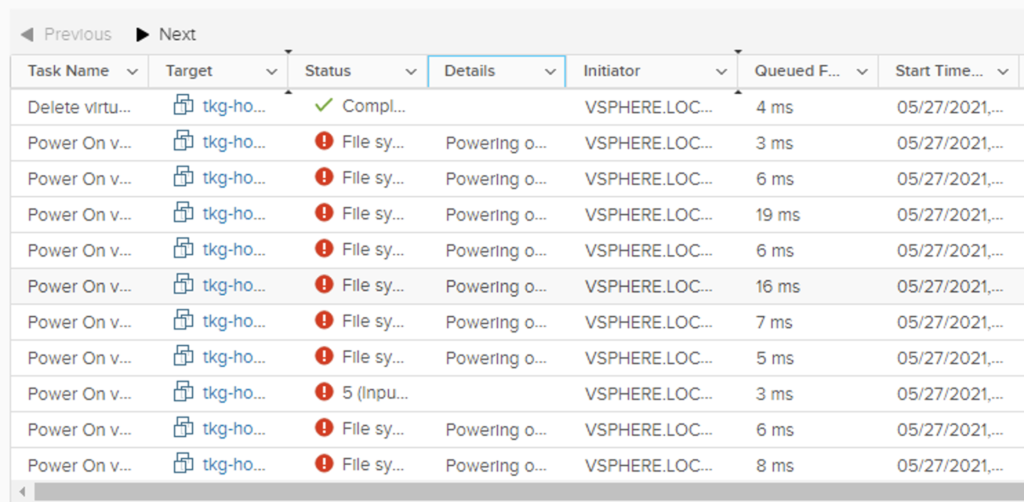

In response, Cluster API provisioned a new node to bring the Kubernetes cluster back to its desired state. At the same time, it kept trying to recover the failed node by powering on the VM.

Here is the Kubernetes cluster status after Tanzu automatically provisioned a new node to replace the failed one.

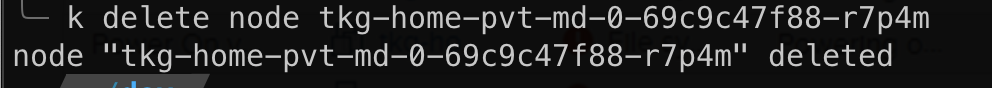
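A status view like the one above can be pulled with kubectl against the workload cluster. This is only a sketch: `show_cluster_nodes` is a hypothetical helper, and the context name you pass in depends on how your kubeconfig is set up.

```shell
# show_cluster_nodes is a hypothetical helper name.
# $1 is the kubectl context of the workload cluster (an assumption: it must
# already exist in your kubeconfig).
show_cluster_nodes() {
  # -o wide adds columns such as internal IP and OS image for each node.
  kubectl --context "$1" get nodes -o wide
}
```

For example, `show_cluster_nodes my-workload-context`, where `my-workload-context` is a placeholder for your own context name. A failed node typically shows up as `NotReady` next to the freshly provisioned one.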

In my case, the failed node was unrecoverable, so the only way to clean it up was to remove it. To do this, run the following:

`kubectl delete node <node name>`

After the node is deleted, Cluster API also deletes the VM object from vCenter.
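If you do this more than once, the cleanup can be wrapped in a small function. This is a rough sketch rather than anything official: `remove_failed_node` is a name I made up, and it assumes your kubectl context points at the cluster that owns the failed node.

```shell
# remove_failed_node is a hypothetical helper name.
# Assumption: the current kubectl context is the cluster that owns the node.
remove_failed_node() {
  # Refuse to run without a node name, to avoid a malformed delete command.
  if [ -z "${1:-}" ]; then
    echo "usage: remove_failed_node <node name>" >&2
    return 1
  fi
  kubectl delete node "$1"
  # List the remaining nodes so the removal can be confirmed.
  kubectl get nodes
}
```

Run it as `remove_failed_node <node name>`, using the name of the failed node from the node list.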

Hope that helps.

Enjoy!